Second Image Processing Robotic Arm

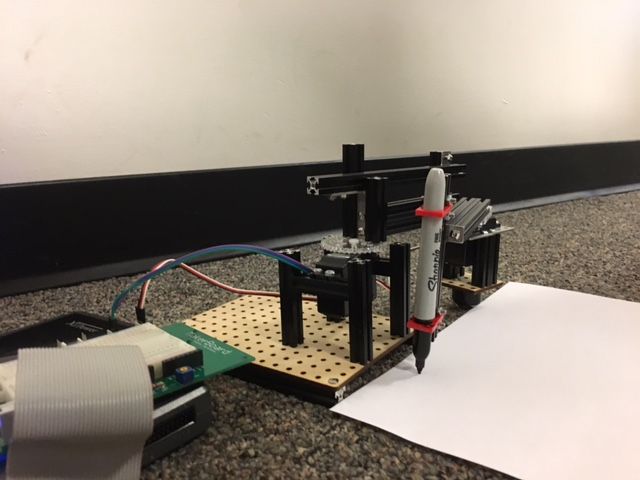

This is essentially a new and improved version of the original Image Processing Arm I built, with a much better hardware system, modified code, and a new way of importing the picture. One of our main issues with the previous arm was that it was fairly unstable, so I completely rebuilt the hardware to stabilize the joint between the two arms. I also used SolidWorks to model parts that hold the Sharpie more securely, and laser cut them so they could be easily attached to the system, as shown below (the red pieces).

We also made a few changes to the software. Rather than searching the image for circles of color and tracing it through the array of their center points, we dilated the image, then eroded it to find the edges, and made a contour plot of all the points on those edges. This gave us far more data for tracing the outline of the letter and produced a much clearer final drawing on paper.

We also wrote the code using TCP (Transmission Control Protocol) blocks so that it could connect to our professor's camera instead of our own. As a result, the robot could take any letter or other image our professor drew on his whiteboard and reproduce it on paper for us. The following link to my Google Drive contains our LabVIEW code for the robot, as well as a video of it in action. The pictures below show another angle of the robot, as well as the first letter it was able to draw.
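The edge-finding step described above can be sketched in a few lines. Our actual code is in LabVIEW, so this Python version is only an illustration under stated assumptions, not our implementation. It assumes the picture has already been thresholded into a binary grid (1 = ink, 0 = whiteboard), uses a 3x3 square neighborhood, and takes the edges as the pixels that are set in the dilated image but not in the eroded one (the standard "morphological gradient"). The functions `dilate`, `erode`, `edge_points`, and `order_path` are hypothetical names, and the greedy nearest-neighbor ordering that turns the edge points into a pen path is an assumed stand-in for however the contour points were actually sequenced for the arm.

```python
from typing import Iterator, List, Tuple

Point = Tuple[int, int]

def _neighborhood(img: List[List[int]], r: int, c: int) -> Iterator[int]:
    """Yield the 3x3 neighborhood around (r, c); out-of-bounds pixels count as 0."""
    rows, cols = len(img), len(img[0])
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            rr, cc = r + dr, c + dc
            yield img[rr][cc] if 0 <= rr < rows and 0 <= cc < cols else 0

def dilate(img: List[List[int]]) -> List[List[int]]:
    """A pixel becomes ink if ANY pixel in its 3x3 neighborhood is ink."""
    return [[int(any(_neighborhood(img, r, c))) for c in range(len(img[0]))]
            for r in range(len(img))]

def erode(img: List[List[int]]) -> List[List[int]]:
    """A pixel stays ink only if ALL pixels in its 3x3 neighborhood are ink."""
    return [[int(all(_neighborhood(img, r, c))) for c in range(len(img[0]))]
            for r in range(len(img))]

def edge_points(img: List[List[int]]) -> List[Point]:
    """Morphological gradient: pixels in the dilated image but not the eroded one."""
    d, e = dilate(img), erode(img)
    return [(r, c) for r in range(len(img)) for c in range(len(img[0]))
            if d[r][c] and not e[r][c]]

def order_path(points: List[Point]) -> List[Point]:
    """Greedy nearest-neighbor ordering so the pen moves to the closest unvisited point."""
    if not points:
        return []
    remaining = list(points)
    path = [remaining.pop(0)]
    while remaining:
        last_r, last_c = path[-1]
        i = min(range(len(remaining)),
                key=lambda k: (remaining[k][0] - last_r) ** 2 + (remaining[k][1] - last_c) ** 2)
        path.append(remaining.pop(i))
    return path

if __name__ == "__main__":
    # A filled 3x3 square stands in for a thresholded letter.
    letter = [
        [0, 0, 0, 0, 0],
        [0, 1, 1, 1, 0],
        [0, 1, 1, 1, 0],
        [0, 1, 1, 1, 0],
        [0, 0, 0, 0, 0],
    ]
    pts = edge_points(letter)
    print(len(pts))  # 24: every pixel except the eroded center (2, 2)
    print(order_path(pts)[:4])
```

Compared with tracking a handful of circle centers, the gradient keeps every boundary pixel, which matches the "far more data" we saw. Mapping these pixel coordinates to joint angles for the arm is a separate step not shown here.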